The Maker Faire week is here, and I am excited! Maker Faire is an annual event that attracts makers, students, developers, the curious-minded, and people of all ages, backgrounds, and dreams who share an interest in technology, science, and the environment. It is a place to discover what others have made and to enjoy learning from it. As planned, my group and I visited the site a day early, on Thursday morning at 10:00 am, to set up for the official Opening Day.

We arrived at Maker Faire Bay Area 2018 with mixed feelings. We were curious about showing the project to the public, including visitors from around the world, for the first time. We were also anxious about the unknown (mostly hidden surprises). Two posters describing the installation were mounted outside the 10 x 10 x 10 tent, and the table of objects was staged on the side nearest the entrance. The stage was set to test our proposition and to see whether we had done enough design research and built enough technology to provoke awareness of how people interact with each other.

Within minutes of the opening, people began to visit and ask questions about the installation. It was the first time we had to explain the project to the public and introduce them to interactive, non-linear storytelling. Visitors randomly selected personal objects from the table and went inside the tent to reveal the interactive story. As more people arrived, the wait time crept past 10 minutes per experience. Most people didn't mind the wait, while others chose to come back later. Over time the stream of visitors stabilized, and we grew more comfortable. Apart from a few times the video froze, there were no real technical issues to worry about. Standing on a concrete floor for the three days of the event, however, wasn't fun. We enjoyed interacting with visitors and hearing their responses. In all, more than 230 people went through the experience over the three-day period.

Results

Measuring the results of the experience posed a challenge: we had no clear system for collecting useful statistical data on the experiment. As a result, we had to rely on observations, user feedback, and questionnaires to form opinions about the results. In retrospect, each topic should have had at least 30 specific questionnaires with 'before' and 'after' responses, creative ways of encouraging visitors to share unsolicited impressions of their experience, and an automatic count of visitors to the tent. Even so, the limited sample revealed enough evidence for us to assert that the Wear My Story experiment provokes awareness that may help mitigate how people judge others at first encounter. We are confident that we are on the right track.

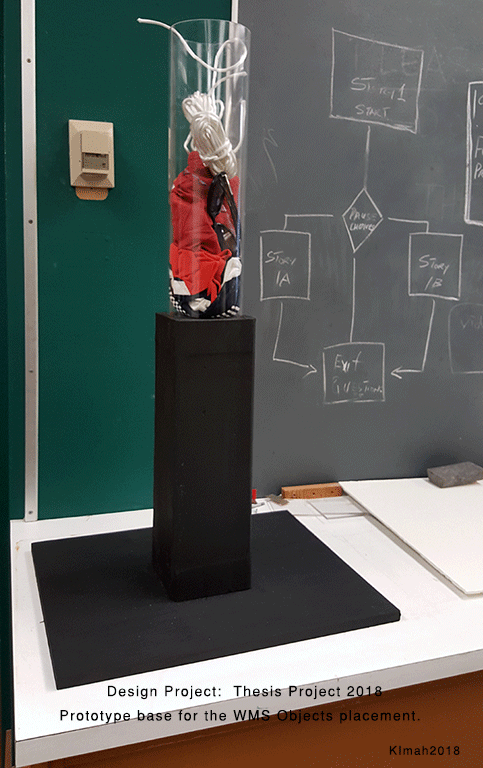

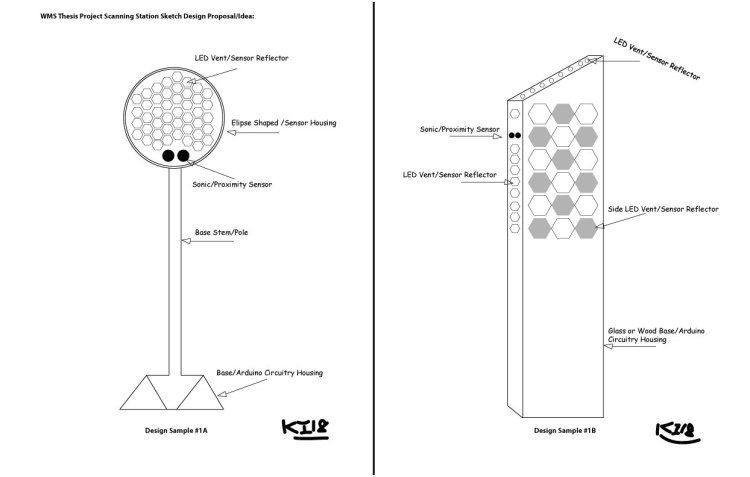

Several devices are installed inside the RFID stand: an RFID reader, an ultrasonic sensor, an Arduino microcontroller board, breadboards, and USB adapters. In future notes, I will expand on each of the sensor devices used to build the scanning station.